Root Cause Analysis

Root Cause Analysis (RCA) is part of a larger conception of organizational improvement. It originated in manufacturing and engineering in postwar Japan and then expanded to healthcare (Arnheiter & Greenland, 2008; Silverstein, 2014; Thwink.org, 2014). It is a legacy of the work of W. Edwards Deming (1986), and it relates both to Total Quality Management, briefly popular in education during the 1980s and early 1990s, and to Improvement Science. Because RCA arrived late in education, reported applications in healthcare are far more numerous, and the healthcare literature is a good place to look for detailed information about RCA techniques. In fact, searching three education databases for “root cause analysis” yielded just 100 documents when healthcare education per se was excluded as a search term. By contrast, searching three healthcare databases yielded over 1,500 documents.

RCA is perhaps best characterized as a toolkit of various stand-alone processes to address problems of practice (in hospitals or schools, for instance). RCA techniques can be used both for problem definition and for problem-solving. Each tool in the kit can be taught and learned (perhaps during a professional development workshop) and then applied. Reports of such learning and subsequent use are common in the healthcare literature (Karkhanis & Thompson, 2021). The lesson for educators from the use of the toolkit in healthcare is that application of each RCA technique requires preparation, training, and support.

The RCA Toolkit

RCA is a collection of very specific techniques. These techniques emerged over time in particular organizational contexts, so there is not just one RCA method (Wagner, 2014). In general, though, all help practitioners define or solve small-scale problems to prevent or remedy errors in routine operations. Some education examples for which RCA techniques are well suited include (1) managing lice infestations, (2) improving the efficiency of bus routes, (3) reducing the overuse of the “F” grade, and (4) reducing suspension rates. RCA can help define such problems and thereby point to possible lines of action.

RCA, however, is not sufficiently robust to enable practitioners to resolve the large-scale systemic problems (“wicked problems”) with which communities and educators struggle. Identifying causes of and remedies for inequitable educational outcomes, for instance, requires more than RCA techniques can deliver.

Of course, leadership teams should be working to change conditions that contribute to “wicked problems.” And to address these conditions, a team might start with one of the root-cause techniques (Wagner, 2014). Then, once RCA has helped the team identify one or two causes to explore, team members can use other steps in the Ohio Improvement Process (OIP) to frame inquiry questions, collect data pertinent to those questions, identify promising strategies, learn how to implement those strategies, actually implement the strategies, and monitor progress.

Four examples of RCA techniques follow. Many more exist (e.g., Minnesota Department of Health, 2021; Wilson, 2021). The choice of a technique should match the nature of the problem, at least as it is initially understood. Doing a root cause analysis, however, will often result in a substantial redefinition of the problem (Arnheiter & Greenland, 2008).

Five whys. Why have things gone wrong on the production line? In the 1970s, Toyota elaborated the “Five Whys Analysis.” The number five was whimsy: the number could have been three or 25. The point of the method, however, is to keep a team asking why (e.g., “Why did the bus routing software increase the total route mileage instead of decreasing it?”) until a satisfactory reason is found. Such a sequence is typical in diagnostic procedures, for instance, in the troubleshooting advice provided through help desks and in FAQs.

Barrier analysis (BA). The issue for barrier analysis is behavior change (Kittle, 2017). BA seeks to identify what, for a particular behavior and a particular group, is most likely to get in the way of a desirable change (e.g., washing hands carefully and effectively). The originators offer a series of easy-to-use tools. Doing a BA, though, involves considerable work, including structured interviews with a fairly large number of people (e.g., 90). Here, too, one sees a diagnostic sequence. The difference is that instead of looking at what went wrong, BA examines what might go wrong so that a team can plan accordingly.

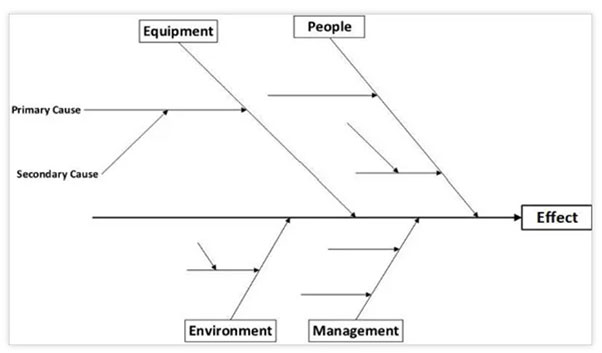

Ishikawa diagram. Kaoru Ishikawa was a leader of the post-war Japanese industrial renaissance, and he was a colleague of Deming. Ishikawa originated the diagram named after him—but colloquially known as a “fishbone” diagram in the US (see Figure 1).

Figure 1. Example of Ishikawa Diagram

Note. Public-domain image from https://www.template.net/business/word-templates/fishbone-diagram-template/

Imagining the use of the Ishikawa diagram in Figure 1, one sees that this technique can get complicated fast. Each contributing category of influence (in this case, equipment, people, environment, and management) can be subdivided endlessly. That process would not be helpful for many leadership teams, because endless complexity is more likely to obscure than to clarify the kinds of problems that educators face.

Typically, the Ishikawa diagram functions to organize a brainstorming session. It is one way to categorize what people regard as possible influences, but it cannot isolate a cause or quantify the varied contributions of several causes. In fact, with most uses of the diagram, the brainstorming process lacks the data and the data analysis tools needed to identify causes. Note that the statistical analysis method known as path analysis does combine possible causes into one explanatory model, rather like an Ishikawa diagram. Rodgers and Oppenheim (2019) describe another data-heavy example that combines data analysis (Bayesian belief networks) with brainstorming.

Pareto analysis. Unlike the Ishikawa diagram, Pareto analysis uses simple data: the percentage of occurrences associated with each category of a problem, tallied in a spreadsheet and plotted in order from most to least frequent. It helps people identify the most common problems on the assumption that 80% of problems are the result of 20% of causes (that ratio is the Pareto principle, familiarly known as the 80/20 rule).
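The arithmetic behind a Pareto analysis is simple enough to sketch in a few lines of Python. The example below is only an illustration under stated assumptions: the category labels and counts are invented, the function names pareto_table and vital_few are hypothetical, and the 80% cutoff is a convention taken from the 80/20 rule rather than a fixed requirement. It sorts categories by frequency, computes each category's share and the running cumulative share, and then lists the smallest group of top categories that reaches the cutoff.

```python
def pareto_table(counts):
    """Return rows of (category, count, percent, cumulative percent),
    ordered from the most to the least frequent category."""
    total = sum(counts.values())
    if total == 0:
        return []
    rows = []
    running = 0
    ordered = sorted(counts.items(), key=lambda item: item[1], reverse=True)
    for category, count in ordered:
        running += count
        rows.append((category, count, 100 * count / total, 100 * running / total))
    return rows


def vital_few(counts, threshold=80.0):
    """Return the top categories, in order, whose cumulative share
    first reaches the threshold (assumed here to be 80 percent)."""
    selected = []
    for category, _count, _percent, cumulative in pareto_table(counts):
        selected.append(category)
        if cumulative >= threshold:
            break
    return selected


# Invented tallies for illustration only; a team would substitute its own counts.
example_counts = {
    "Reason A": 45,
    "Reason B": 35,
    "Reason C": 10,
    "Reason D": 6,
    "Reason E": 4,
}

for category, count, percent, cumulative in pareto_table(example_counts):
    print(f"{category:<10} {count:>4} {percent:6.1f}% {cumulative:6.1f}%")

print("Categories reaching the cutoff:", vital_few(example_counts))
```

A table like this shows only where occurrences concentrate. It does not show why they occur, so a team would still need other data and other techniques to investigate the leading categories.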

So What?

Additional techniques exist in the RCA toolkit, each one more or less a variation on the others. They are linked together, in fact, by an underlying reality—a root cause, perhaps, of Root Cause Analysis. This underlying reality is the fact that most members of education leadership teams have access to just a few types of data and limited facility with data analysis.

Nevertheless, the use of data is essential when problems are identified, examined, and addressed. Professional development offerings have struggled to help educators become better data analysts, and effective problem-solving involves even more than that (Love et al., 2008). It involves defining the problem, asking the right questions, gathering the right data, and then analyzing the data carefully to answer those questions. RCA can help with some of these steps, especially when processes for using data well are already in place.

Cautions about RCA

RCA techniques are relatively easy to teach and for novices to learn (Hand & Seibert, 2016; Wagner, 2014), at least in comparison with such related techniques as regression analysis, path analysis, and other statistical methods. Because of the relative ease of RCA techniques, educators should be cautious when they use them. Four warnings appear next.

RCA is data-analysis light. RCA helps people who are relatively new to data analysis organize hunches, experience, and some data (but usually not too much). It helps teams get beyond the chaos of too much data and too little collaboration (James-Ward et al., 2012). A cautious use of RCA helps leadership teams ask the right questions and define problems well. It can build capacity for analysis and critique.

RCA has limits. None of the RCA techniques works to address all problems. The RCA toolkit as a whole is better adapted to small- than to large-footprint problems. Users must also decide which technique matches the problem they’re dealing with, and—even more important—how to cut a larger problem down to size for using a chosen RCA technique.

RCA sounds impressive: root causes. But it’s important to note that RCA cannot actually isolate causes with much certainty (Landsittel et al., 2020). Still, RCA has a track record, at least in healthcare. With good preparation and appropriate assistance, it can also help teams of educators confront issues of practice that they might otherwise leave unexamined (e.g., Lundberg & Dangel, 2019).

Talking about root causes is challenging. Serious attempts to surface “root causes”—fundamentally influential circumstances—are likely to offend some team members and to be uncomfortable for many (Arnheiter & Greenland, 2008). With high organizational stakes, diverse team membership, or challenging problems, the design and use of an RCA requires a facilitator who can manage complex processes, orchestrate difficult conversations, and encourage the involvement of all participants (Stone et al., 2010).

RCA and the Spirit of Inquiry

The Ohio Improvement Process (OIP) expects that collaborative leadership teams (TBTs, BLTs, and DLTs) will embrace the spirit of inquiry and address challenging problems often and regularly. RCA offers a helpful toolkit in this context. It’s only a start, however. Teams must follow up with data collection, reflection, planning, collaborative learning, and more action.

Like the Plan-Do-Study-Act (PDSA) cycles that Improvement Science recommends, RCA techniques work well when they are intentionally confined to the sorts of specific problems they were engineered to take on. As noted above, OIP offers a broader set of tools for examining and finding ways to remedy the very challenging (“wicked”) problems that school districts continue to confront, even after using tools like RCA to fix errors in routine processes.

References

Arnheiter, E. D., & Greenland, J. E. (2008). Looking for root cause: A comparative analysis. TQM Journal, 20(1), 18–30. https://doi.org/10.1108/09544780810842875

Deming, W. E. (1986). Out of the crisis. Massachusetts Institute of Technology, Center for Advanced Engineering Study.

Hand, M. W., & Seibert, S. A. (2016). Linking root cause analysis to practice using problem-based learning. Nurse Educator, 41(5), 225–227. https://doi.org/10.1097/NNE.0000000000000256

James-Ward, C., Frey, N., & Fisher, D. (2012). Root cause analysis. Principal Leadership, 13(2), 59–61.

Karkhanis, A. J., & Thompson, J. M. (2021). Improving the effectiveness of root cause analysis in hospitals. Hospital Topics, 99(1), 1–14. https://doi.org/10.1080/00185868.2020.1824137

Kittle, B. L. (2017). A practical guide to conducting a barrier analysis. Helen Keller International. https://pdf.usaid.gov/pdf_docs/PA00JMZW.pdf

Landsittel, D., Srivastava, A., & Kropf, K. (2020). A narrative review of methods for causal inference and associated educational resources. Quality Management in Health Care, 29(4), 260–269. https://doi.org/10.1097/QMH.0000000000000276

Love, N., Stiles, K. E., Mundry, S., & DiRanna, K. (2008). The data coach’s guide to improving learning for all students: Unleashing the power of collaborative inquiry. Corwin.

Lundberg, A., & Dangel, R. F. (2019). Using root cause analysis and occupational safety research to prevent child sexual abuse in schools. Journal of Child Sexual Abuse, 28(2), 187–199. https://doi.org/10.1080/10538712.2018.1494238

Minnesota Department of Health. (2021). Root cause analysis toolkit. https://www.health.state.mn.us/facilities/patientsafety/adverseevents/toolkit/

Rodgers, M., & Oppenheim, R. (2019). Ishikawa diagrams and Bayesian belief networks for continuous improvement applications. TQM Journal, 31(3), 294–318. https://doi.org/10.1108/TQM-11-2018-0184

Silverstein, R. (2014). Root cause analysis webinar: Q&A with Roni Silverstein. Regional Educational Laboratory Mid-Atlantic. http://files.eric.ed.gov/fulltext/ED560799.pdf

Stone, D., Patton, B., Heen, S., & Fisher, R. (2010). Difficult conversations: How to discuss what matters most. Penguin Books.

Thwink.org. (2014). Root cause analysis: How it works at Thwink.org. https://www.thwink.org/sustain/publications/booklets/04_RCA_At_Thwink/RCA_At_Thwink_Pages.pdf

Wagner, T. P. (2014). Using root cause analysis in public policy pedagogy. Journal of Public Affairs Education, 20(3), 429–440. https://doi.org/10.1080/15236803.2014.12001797

Wilson, B. (2021). Root cause analysis 101. http://www.bill-wilson.net/root-cause-analysis/rca-article-guide